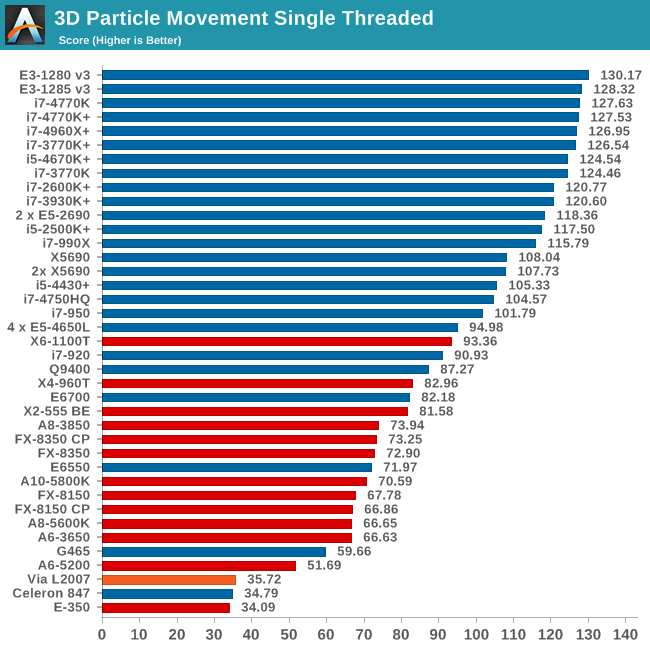

Nevertheless, such a rate will NEVER correlate properly with actual customer experience. Benchmark programs normally run in a controlled environment, so they can be manipulated to produce a desired rate: the highest possible. Simply running benchmark software does not amount to a full performance analysis.

There is another factor to consider when comparing systems with the intention of buying them: the price/performance rate. This rate can be quantified by including the 5-year capital cost of the equipment.

Generally, a benchmark highlights the aspect it is optimized for, at the expense of other aspects that are not being measured (and that could be important to the customer). Such behavior produces misleading results that can undermine confidence in the brand or the provider. Smaalders urges choosing benchmarks that truly represent customer needs; otherwise, all the effort will end up optimizing for the wrong behavior. He also encourages resisting the temptation to optimize for the benchmark with the goal of winning the contest at any price.

Smaalders lists several criteria for good benchmarks:

- Runnable, so that all developers can quickly ascertain the effects of their changes. If it takes days to get performance results, it will not happen very often.
- Realistic, so that measurements reflect customer-experienced realities.
- Portable, so that comparisons are possible with your main competitors (even if they are your own previous releases). Maintaining a history of the performance of previous releases is a valuable aid to understanding your own development process.
- Easily presented, so that everyone can understand the comparisons in a brief presentation.

A benchmark should also give the developer somewhere to start looking: nothing is more frustrating than a complex benchmark that delivers a single number, leaving the developer with no additional information as to where the problem might lie.

Not all benchmarks selected will meet all of these criteria, but it is important that some of them do.
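The following is a minimal sketch, under stated assumptions, of a benchmark that avoids the single-number problem. It is written in Python. It times several named phases of a workload over repeated runs and reports a median, minimum and maximum for each phase. The helper `run_benchmark`, the phase names and the toy workload are all hypothetical stand-ins for illustration. They are not taken from Smaalders or from any benchmarking library.

```python
import statistics
import time
from typing import Callable, Dict, List


def run_benchmark(
    phases: Dict[str, Callable[[], object]], repeats: int = 5
) -> Dict[str, Dict[str, float]]:
    """Time every named phase `repeats` times and summarize each one.

    Returning a per-phase breakdown instead of one aggregate number gives
    the developer a place to start looking when performance regresses.
    """
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    samples: Dict[str, List[float]] = {name: [] for name in phases}
    for _ in range(repeats):
        for name, phase in phases.items():
            start = time.perf_counter()
            phase()
            samples[name].append(time.perf_counter() - start)
    return {
        name: {
            "median_s": statistics.median(times),
            "min_s": min(times),
            "max_s": max(times),
        }
        for name, times in samples.items()
    }


if __name__ == "__main__":
    # Hypothetical workload: three stand-in phases of an application.
    data = list(range(200_000))
    report = run_benchmark(
        {
            "build": lambda: [x * 2 for x in data],
            "sort": lambda: sorted(data, reverse=True),
            "sum": lambda: sum(data),
        }
    )
    for name, stats in report.items():
        print(
            f"{name:>6}: median {stats['median_s'] * 1e3:.2f} ms "
            f"(min {stats['min_s'] * 1e3:.2f}, max {stats['max_s'] * 1e3:.2f})"
        )
```

The sketch reports each phase separately, so a regression appears next to the phase that caused it. Repeating the runs and reporting the spread also shows how stable the measurement is. A small default `repeats` keeps the run short enough for developers to use on every change.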

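The price/performance rate can be illustrated with a rough sketch. It assumes the rate is the equipment's 5-year capital cost divided by the score from a chosen throughput benchmark, where a higher score is better. A lower result means more throughput per unit of money spent. The `Candidate` type, the two systems and their figures are hypothetical and serve only as an example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Candidate:
    name: str
    five_year_cost: float   # assumed: capital cost of the equipment over five years
    benchmark_score: float  # assumed: throughput from the chosen benchmark; higher is better


def price_performance(c: Candidate) -> float:
    """Cost per unit of benchmark throughput; lower is better."""
    if c.benchmark_score <= 0:
        raise ValueError(f"{c.name}: benchmark_score must be positive")
    return c.five_year_cost / c.benchmark_score


def rank_by_price_performance(candidates: List[Candidate]) -> List[Tuple[str, float]]:
    """Return (name, price/performance) pairs, best value first."""
    return sorted(
        ((c.name, price_performance(c)) for c in candidates), key=lambda p: p[1]
    )


if __name__ == "__main__":
    # Hypothetical quotes, for illustration only.
    quotes = [
        Candidate("System A", five_year_cost=120_000, benchmark_score=4_000),
        Candidate("System B", five_year_cost=90_000, benchmark_score=2_500),
    ]
    for name, ratio in rank_by_price_performance(quotes):
        print(f"{name}: {ratio:.2f} per unit of throughput")
```

In this made-up case, the more expensive System A costs 30.00 per unit of throughput and System B costs 36.00. The ratio still inherits every weakness of the benchmark behind it, so it is only as meaningful as that benchmark is representative of the customer's workload.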